AI on the virtual stage

Posted on May 10, 2026 by Admin

Artificial intelligence is transforming film and television production, and creeping inside the LED volumes of virtual studios too. While still a supporting player, AI is increasingly being used to streamline workflows and solve production bottlenecks, but not to replace real-world filmmaking.

Words Adrian Pennington

Quite Brilliant, a virtual production studio in London, has begun to augment its workflows with AI tools. “Traditionally in virtual production we’ve used 3D games engines like Unreal,” says Russ Shaw, the studio’s VP supervisor. “More recently, tools like Chaos Arena, which is a CGI-based backend using V-Ray, produce more realistic imagery. Even then, you still don’t get the exact realism you would from shooting a real plate or having a genuine environment behind the actors.”

AI sits in between. “It can create convincing imagery quickly and cost-effectively,” he says. “You’re not getting full 3D parallax, but you are getting something that looks far more realistic than earlier game-engine approaches.

“It’s really about delivering more for less,” Shaw says. “Clients want high production value, but they also want faster turnaround and tighter budgets. AI helps us meet those expectations.”

In advertising production, where speed is crucial, the time savings can be significant. Quite Brilliant recently used AI-generated environments for a campaign linked to ITV’s The Birthday Draw, enabling the team to film multiple locations within a single studio shoot.

“That project involved 15 different set-ups over five days,” Shaw says. “We could jump from a beach environment to a suburban street very quickly because the backgrounds were generated digitally.”

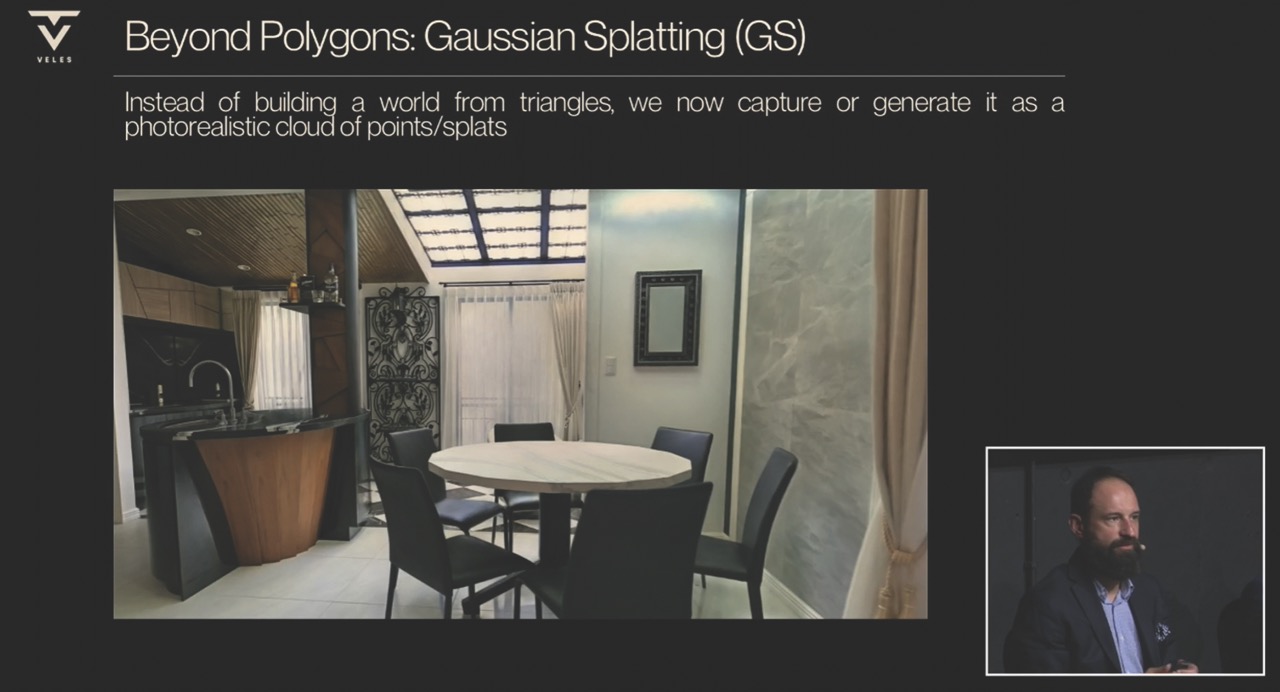

Gaussian splats evolve

Veles Productions, a Warsaw-based VP services company, estimates that certain virtual sets can now be produced at a fraction of traditional costs. What once needed weeks of labour can now be achieved in hours – or even minutes – with iterative prompting.

“This is not about saving a bit of time,” says CEO Artur Paprocki. “It’s an absolute game changer. If something is ten times cheaper, there just isn’t an argument any more.”

At the centre of this shift is Gaussian splatting, a technique that reconstructs 3D environments using dense point clouds derived from images or scans. Unlike traditional polygon-based modelling, it allows for fast, relatively lightweight scene generation with convincing depth and parallax.

“Current AI tools are not without flaws,” he says. “There are hallucinations, perspective inconsistencies and limited animation and interactivity.”

Yet progress is happening at an extraordinary pace. “Tools that didn’t exist in December are available now,” notes Martynian Rozwadowski, Veles’ head of technology. “Editing, relighting, moving objects – it’s all improving.”

The comparison to early generative AI models is telling.

“With Gaussian splatting, we are somewhere between ChatGPT 2 and 3.5,” Paprocki says (the latest version is 5.2). “But it’s moving fast.”

Last year, Veles used the 3D model Marble to generate Gaussian splats from a text prompt, in what was believed to be a world first. The company used the technique with Unreal Engine and its own virtual production stage to create a pop video for Polish singer Naczzos.

Now the studio has begun to phase Unreal Engine out of its pipeline. “If you build a scene in Unreal, you need the entire pipeline – modelling, texturing, lighting, optimisation,” says Paprocki. “That’s extremely expensive. Compared to prompting a model and placing it directly into the scene, it’s a completely different scale.”

New hybrid workflow

Convincing clients to invest in full Unreal pipelines is becoming more and more difficult when AI alternatives are so readily accessible.

“Sometimes the director doesn’t even want a full 3D scene,” explains Rozwadowski. “They just want a plate, a background image or simple animation. That can be AI-generated very easily.”

Rather than replacing existing methods outright, Veles see the future as a hybrid of physical and virtual elements: real sets and actors in the foreground, traditional 3D environments (eg Unreal Engine) in the midground and AI-generated Gaussian splat backdrops behind them.

“If you want interaction, use real sets or 3D,” Paprocki says. “If you want cheap, flexible backgrounds with parallax, use Gaussian splatting. The approach is still evolving – but it represents a practical bridge between established and emerging technologies.”

The copyright Wild West

The legal framework surrounding AI-generated environments is uncertain. Paprocki likens it to a ‘wild west’.

When scanning real locations, the existing rules still apply. Permissions may be required, particularly for recognisable buildings or protected spaces. However, AI-generated environments occupy a grey area.

“If we reproduce a location by scanning, we follow the rules,” he says. “But when AI generates a space from a prompt – how do copyrights even exist in that situation?”

For now, the company has taken a pragmatic stance: act first, respond later. “If I am able to do it, I do it. If somebody has concerns, then we deal with it. It’s too early to define strict rules.”

It is a position which highlights broader industry ambiguity, as legal systems struggle to keep pace with generative technologies.

“Many brands are concerned about how AI models are trained, so we often ‘sandbox’ the system,” Quite Brilliant’s Shaw explains. “Inputs and outputs stay private so the model is only trained on material we provide, rather than scraping data from the internet.

“We can’t just type prompts like: ‘Use a Canon 25mm lens and make the background look like X location.’ You have to be careful about training data, intellectual property and how the generated image might feed back into future training datasets.”

One technique involves combining AI-generated textures with traditional 3D modelling. Artists first create simple ‘blockout’ scenes – basic geometric shapes that define the layout of buildings, streets or landscapes.

AI is used to generate visual surfaces for the shapes, like brickwork or vegetation.

“The structure of the scene still comes from the creative team,” Shaw says. “AI is essentially just skinning it.”

“We also create mood boards and train models on specific references. If we’re working on a car, for example, we’ll feed the system detailed images of headlights, bodywork and other elements so it understands that particular model.”

Some AI providers like Runway offer licensed enterprise versions with IP protection and claim their outputs are copyright-protected. “In theory that is great, but it’s difficult to know how robust that protection really is, especially when models may include international components or opaque training sources.”

AI expertise in demand

Integration of AI into studio environments means studios need to equip themselves with specialists. “It’s a real skill,” says Shaw. “There are dozens of wrappers and platforms where models can run.”

Models such as Google’s AI image editor Nano Banana might appear inside other software packages such as Higgsfield or Runway, so trying to use them effectively is a challenge. “Everything is evolving extremely quickly and the quality differences between them can be dramatic.”

The Veles team is candid: some roles will disappear. “If you love working in Unreal, you might have a problem,” he says. “But if you love making films, you will find a way.”

Interestingly, the team has observed that younger creatives are not always the quickest to adapt.

“We expected young people to lead this,” he shares. “But often it’s mid-career professionals – people with previous experience – who benefit the most. These filmmakers bring narrative expertise and are able to leverage AI as a tool rather than resist it.”

Taking a look at AI worldbuilding and physical lighting

When we talk about using AI-generated worlds with physical lighting, we’re really talking about a pipeline where AI provides the structure and intent, and the lighting department then turns that intent into something physically and photographically correct.

“The workflow starts much earlier than people think,” Russ Shaw says. “We don’t necessarily just jump straight into AI imagery by using prompts (perhaps for storyboards), but we begin the process with 3D blockouts.”

These are usually simple geometries with rough architecture, basic horizon lines, proxy props and even just cubes and planes standing in for major shapes. Blockouts deliver true spatial relationships: for example, where light would fall, where shadows land and where occlusion might occur.

“We take the 3D layout and feed it into an AI model that can ‘skin’ the blockout, which is essentially texturing it, detailing it, proposing materials, weathering, atmospheric effects, colour grades, vegetation patterns and surface detail,” Shaw explains. “This keeps the structure grounded while letting the AI generate the aesthetic layer.”

Alternatively, a storyboard can provide a visual structure reference. AI then fills in the environmental detail based on the framing, angle and intended mood of each shot.

“Either way, the goal is the same: AI is generating a world, but the design must still come from us,” Shaw says.

This article appears in the April/May 2026 issue of Definition